Definition of catbouncemax (shortened form of “maximum catbounce”): for any particular cat, its catbouncemax is equal to the takeoff kinetic energy of that cat if it suddenly and unexpectedly finds itself face-to-face with an adult copperhead snake.

I’ve actually seen this happen. Really. The cat reached a height I estimate as 1.4 meters.

Measured in joules, a cat’s catbouncemax can most easily be approximated by observing and estimating the maximum height of the cat under these conditions. For ethical and safety reasons, of course, one must simply be observant, and wait for this to happen. Deliberately introducing cats and copperheads (or other dangerous animals) to each other is specifically NOT recommended. Staying away from copperheads, on the other hand, IS recommended. Good science requires patience!

After the waiting is over (be prepared to wait for years), and the cat’s maximum height h, in meters, has been estimated, the cat’s catbouncemax can be determined by energy conservation: its takeoff kinetic energy (stored as feline potential energy until the moment the cat spots the copperhead) is equal to the gravitational potential energy (PE = mgh) of the cat at the top of the parabolic arc. In the catbounce I witnessed, the cat was walking through tall grass, which is why he didn’t see the snake coming. He was a big cat, at an estimated mass of 6.0 kg. His catbouncemax was therefore, by energy conservation, equal to mgh = (6.0 kg)(9.81 m/s²)(1.4 m) ≈ 82 joules, which means this particular cat had 82 J of ophidiofeline potential energy stored, specifically for use in the event of an encounter with a large, adult copperhead, or with some other animal (there aren’t many) able to scare this cat as much as such a copperhead.

(I’m using a copperhead in this account for one reason: that’s the type of animal which initiated the highest catbounce I have ever witnessed, and I seriously doubt that this particular cat could jump any higher than 1.4 m, under any circumstances.)
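For anyone who would rather let a computer do the multiplication, the arithmetic above can be sketched in a few lines of Python. This is only a rough illustration: the function names `catbouncemax_joules` and `catbounce_fraction` are made up for this example, and the 6.0 kg and 1.4 m figures are my own eyeball estimates, not measurements.

```python
G = 9.81  # gravitational acceleration in m/s^2, the same value used in the text


def catbouncemax_joules(mass_kg: float, height_m: float, g: float = G) -> float:
    """Estimate a cat's takeoff energy as m * g * h (hypothetical helper)."""
    if mass_kg <= 0 or height_m < 0:
        raise ValueError("mass must be positive and height must be non-negative")
    return mass_kg * g * height_m


def catbounce_fraction(height_m: float, max_height_m: float) -> float:
    """Fraction of catbouncemax used by a lesser catbounce.

    Mass and g cancel for the same cat, so only the two heights matter.
    """
    if max_height_m <= 0:
        raise ValueError("maximum height must be positive")
    return height_m / max_height_m


# Estimated values from the copperhead incident: 6.0 kg cat, 1.4 m peak height.
energy = catbouncemax_joules(6.0, 1.4)
print(f"catbouncemax = {energy:.1f} J")  # prints "catbouncemax = 82.4 J"
```

Because mass and g cancel when comparing two jumps by the same cat, a lesser catbounce can be expressed as a fraction of catbouncemax from the two heights alone, which is what `catbounce_fraction` does.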

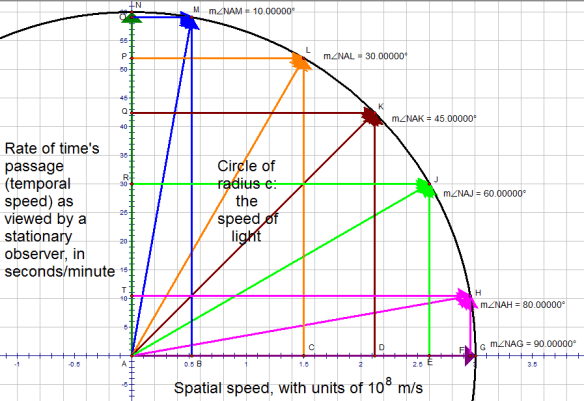

It should be noted that the horizontal distance covered by a catbounce is not needed for this estimate of a cat’s catbouncemax. (Strictly speaking, mgh accounts only for the vertical part of the takeoff kinetic energy, so the estimate is a lower bound for a cat that also travels sideways.) However, this horizontal distance will not be zero, as is apparent in the diagram above. Why? Simple: cats don’t jump straight up in reaction to copperheads, for they are smart enough not to want to fall right back down on top of such a snake.

It is more common, of course, for cats to jump away from things which are less scary than adult copperheads. For example, there certainly exist centipedes large enough to scare a cat into a catbounce, though one with less energy than that cat’s catbouncemax. The following fictional dialogue demonstrates how such lesser catbounces can be most easily described. (Side note: this dialogue is set in Arkansas, where we have cats and copperheads, and where I witnessed the copperhead-induced maximum catbounce described above.)

She: Did you see that cat jump?!?

He: Yep! Must be something scary, over there in that there flowerpatch, for Cinnamon to jump that high. At least I know it’s not a copperhead, though.

She: A copperhead? How do you know that?

He: Oh, that was quite a jump, dear, but a real copperhead would give that cat of yours an even higher catbounce than that! The catbounce we just saw was no more than 75% of Cinnamon’s catbouncemax, and that’s being generous.

She: Well, what IS in the flowerpatch? Something sure scared poor Cinnamon! Go check, please, would you?

He: [Walks over from the front porch, where the couple has been standing this whole time, toward the flowerpatch. Once he gets halfway there, he stops abruptly, and shouts.] Holy %$#@! That’s the biggest centipede I’ve ever seen!

She: KILL IT! KILL IT NOW!