People have been trying to figure out what intelligence is, and how it differs from person to person, for centuries. Much has been written on the subject, and some of this work has helped people. Unfortunately, much harm has been done as well. Consider, for example, the harm that has been done by those whose work was tainted by racism, sexism, or some other form of “us and them” thinking. This model is an attempt to eliminate such extraneous factors, and focus on the essence of intelligence. It is necessary to start, therefore, with a clean slate (to the extent possible), and then try to figure out how intelligence works, which must begin with an analysis of what it is.

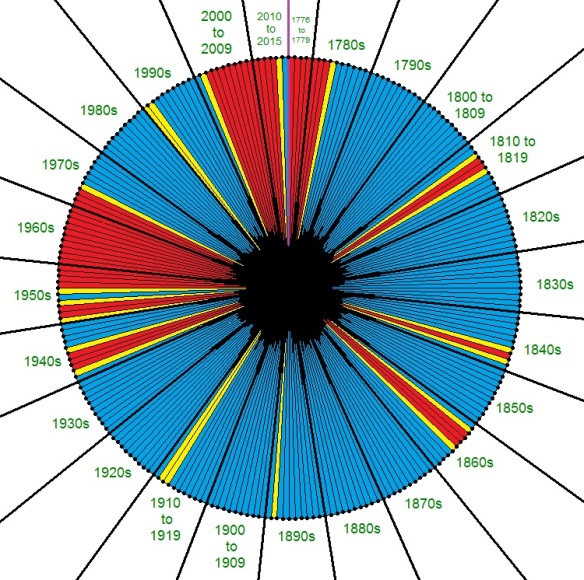

Suppose two people are the same age (five years old, say) and a battery of tests has been given to them to see how much they know at that age, on a wide variety of subjects. Person A (represented by the blue curve) may be found to know more, at that age, than person B (represented by the red curve). At that age, one could argue that person A is smarter than person B. Young ages are found on the left side of the graph above, and the two people get older, over their lifespans, as the curves move toward the right side of the graph.

What causes person A to know more than person B, at that age? There can be numerous factors in play, but few will be determined by any conscious choices these two people made over their first five years of life. Person B, for example, might have been affected by toxic substances in utero, while person A had no such disadvantage. On the other hand, person A might simply have been encouraged by his or her parents to learn things, while person B suffered from parental neglect. At age five, schools are not yet likely to have had as much of an impact as other factors.

An important part of this model is the recognition that people change over time. Our circumstances change. Illnesses may come and go. Families move. Wars happen. Suppose that, during the next year, person B is lucky enough to get to enroll in a high-quality school, some distance from the area where these two people live. Person B, simply because he or she is human, does possess curiosity, and curiosity is the key to this model. Despite person B’s slow start with learning, being in an environment where learning is encouraged works. This person begins to acquire knowledge at a faster rate. On the graph, this is represented by the red curve’s slope increasing. This person is now gaining knowledge at a much faster rate than before.

In the meantime, what is happening with person A? There could be many reasons why the slope of the blue curve decreases, and this decrease simply indicates that knowledge, for this person, is now being gained at a slower rate than before. It is tempting to leap to the assumption that person A is now going to a “bad” school, with teachers who, at best, encourage rote memorization, rather than actual understanding of anything. Could this explain the change in slope? Yes, it could, but so could many other factors. It is undeniable that teachers have an influence on learning, but teacher quality (however it is determined, which is no easy task) is only one factor among many. Encouraging the “blame the teacher” game is not the goal of this model; there are already plenty of others doing that.

Perhaps person A became ill, suffered a high fever, and sustained brain damage as a result. Perhaps he or she is suddenly orphaned, therefore losing a previous, positive influence. There are many other possible factors which could explain the sudden decrease in the slope of this child’s blue “learning curve” shown above; our species has shown a talent for inventing horrible things to do to, well, our species. Among the worst of the nightmare scenarios is that, while person B is learning things, at a distant school, the area where person A still lives is plunged into civil war, and/or a genocide-attempt is launched against the ethnic group which person A belongs to, as the result of nothing more than an accident of birth, and the bigotry of others. Later in life, on the graph above, the two curves intersect; beyond that point, person B knows more than person A, despite person B’s slow start. To give credit, or blame, to either of these people for this reversal would clearly be, at best, a severely incomplete approach.

At some point, of course, some people take the initiative to begin learning things on their own, becoming autodidacts, with high-slope learning curves. In other words, some people assume personal responsibility for their own learning. Most people do not. Few would be willing to pass such judgment on a child who is five or six years old, but what about a college student? What about a high school senior? What about children who have just turned thirteen years old? For that matter, what about someone my age, which is, as of this writing, 48? It seems that, the older a person is, the more likely we are to apply this “personal responsibility for learning” idea. Especially with adults, the human tendency to apply this idea to individuals may have beneficial results. That does not, however, guarantee that this idea is actually correct.

I must stop analyzing the graph above for now, because the best person for me to examine, at this point, in detail, is not on the graph above. He is, however, the person I know better than anyone else: myself. I’ve been me now for over 48 years, and have been “doing math problems for fun” (as my blog’s header-cartoon puts it) for as long as I can remember. This is unusual, but, if I’m honest, I have to admit that there are inescapable and severe limits on the degree to which I can make a valid claim that I deserve credit for any of this. I did not select my parents, nor did I ask either of them to give me stacks of books about mathematics, as well as the mathematical sciences. They simply noticed that, when still young, I was curious about certain things, and provided me with resources I could use to start learning, early, at a rapid rate . . . and then I made this a habit, for, to me, learning is fun, if (and only if) the learning is in a field I find interesting. I had absolutely nothing to do with creating this situation. My parents had the money to buy those math books; not all children are as fortunate in this respect. Later still, I had the opportunity to attend an excellent high school, with an award-winning teacher of both chemistry and physics. To put it bluntly, I lucked out. As Sam Harris, the neuroscientist, has written, “You cannot make your own luck.”

At no point in my life have I managed to learn how to create my own luck, although I have certainly tried, so I have now reached the point where I must admit that, in this respect, Sam Harris is correct. For example, I am in college, again, working on a second master’s degree, but this would not be the case without many key factors simply falling into place. I didn’t create the Internet, and my coursework is being done on-line. I did not choose to be born in a nation with federal student loan programs, and such student loans are paying my tuition. I did not create the university I am attending, nor did I place professors there whose knowledge exceeds my own, regarding many things, thus creating a situation where I can learn from them. I did not choose to have Asperger’s Syndrome, especially not in a form which has given me many advantages, given that my “special interests” lie in mathematics and the mathematical sciences, which are the primary subjects I have taught, throughout my career as a high school teacher. The fact that I wish to be honest compels me to admit that I cannot take credit for any of this — not even the fact that I wish to be honest. I simply observed that lies create bad situations, especially when they are discovered, and so I began to try to avoid the negative consequences of lying, by breaking myself of that unhelpful habit.

The best we can do, in my opinion, is try to figure out what is really going on in various situations, and discern which factors help people learn at a faster rate, then try to increase the number of people influenced by these helpful factors, rather than harmful ones. To return to the graph above, we will improve the quality of life, for everyone, if we can figure out ways to increase the slope of people’s learning-curves. That slope could be called the learning coefficient, and it is simply the rate at which a person’s knowledge is changing over time, at any given point along that person’s learning-curve. This learning coefficient can change for anyone, at any age, for numerous reasons, a few of which were already described above. Learning coefficients therefore vary from person to person, and also within each person, at different times in an individual’s lifetime. The frequently heard term “lifelong learning” translates, on such graphs, to keeping learning coefficients high throughout our lives. The blue and red curves on the graph above change slope only early in life, but such changes can, of course, occur at other ages, as well.
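For readers who like to see the arithmetic, here is a minimal sketch of the learning coefficient as a slope, written in Python. Everything in it is an assumption made for illustration: the function names are hypothetical, knowledge is measured in arbitrary units, and the numbers for persons A and B are invented rather than read off the graph above. They are only chosen so that person A starts ahead and slows down, while person B starts behind and speeds up.

```python
def learning_coefficients(ages, knowledge):
    """Approximate the slope of a learning curve between consecutive samples."""
    slopes = []
    for i in range(1, len(ages)):
        change_in_knowledge = knowledge[i] - knowledge[i - 1]
        change_in_age = ages[i] - ages[i - 1]
        slopes.append(change_in_knowledge / change_in_age)
    return slopes


def crossover_age(ages, curve_a, curve_b):
    """Estimate the age at which curve_b catches up with curve_a.

    Uses straight-line interpolation between samples; returns None
    if curve_b never catches up within the sampled ages.
    """
    for i in range(1, len(ages)):
        gap_before = curve_a[i - 1] - curve_b[i - 1]
        gap_after = curve_a[i] - curve_b[i]
        if gap_before > 0 and gap_after <= 0:
            fraction = gap_before / (gap_before - gap_after)
            return ages[i - 1] + fraction * (ages[i] - ages[i - 1])
    return None


# Invented sample data (arbitrary knowledge units), NOT taken from the graph.
ages = [5, 6, 7, 8, 9, 10]
person_a = [50, 60, 64, 68, 72, 76]  # starts ahead, then slows down
person_b = [30, 36, 48, 60, 72, 84]  # starts behind, then speeds up

print(learning_coefficients(ages, person_a))  # [10.0, 4.0, 4.0, 4.0, 4.0]
print(learning_coefficients(ages, person_b))  # [6.0, 12.0, 12.0, 12.0, 12.0]
print(crossover_age(ages, person_a, person_b))  # 9.0
```

With these made-up numbers, person B's learning coefficient doubles after age six while person A's drops, and the two curves meet at age nine. The sketch says nothing about why the slopes change, which is the real question this model is concerned with.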

It is helpful to understand what factors can affect learning coefficients. Such factors include people’s families, health, schools and teachers, curiosity, opportunities (or lack thereof), wealth and income, government laws and policies, war and/or peace, and, of course, luck, often in the form of accidents of birth. Genetic factors, also, will be placed on this list by many people. I am not comfortable with such DNA-based arguments, and am not including them on this list, for that reason, but I am also willing to admit that this may be an error on my part. This is, of course, a partial list; anyone reading this is welcome to suggest other possible factors, as comments on this post.